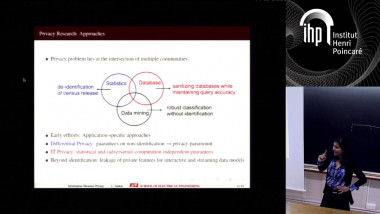

The Price of Privacy in High-Dimensional Statistics

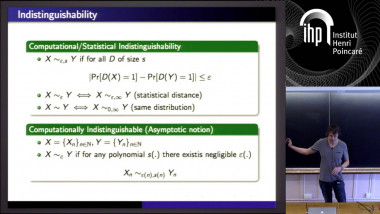

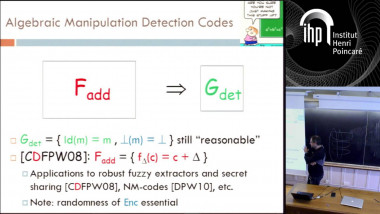

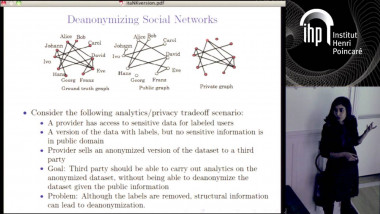

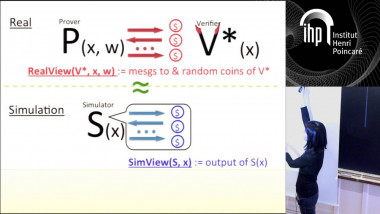

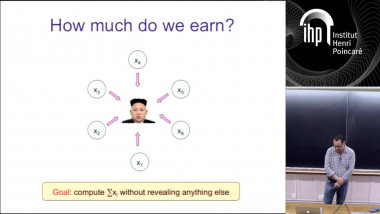

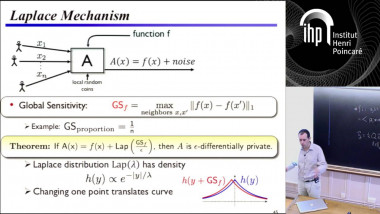

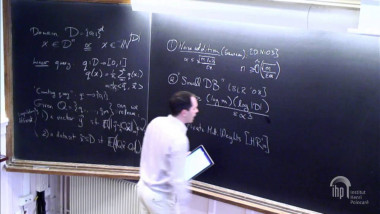

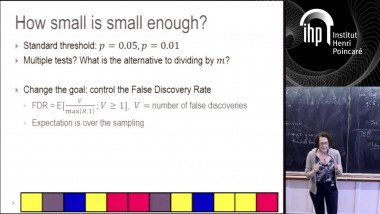

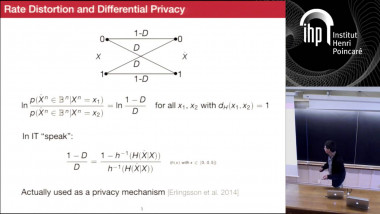

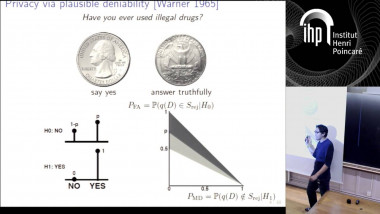

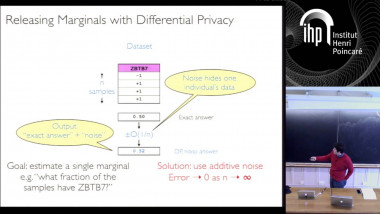

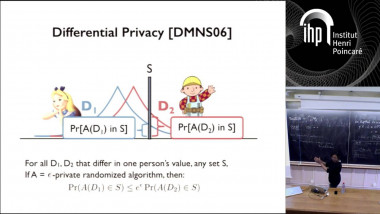

I will present new, nearly optimal lower bounds on the amount of data required to release differentially private statistics on high-dimensional datasets, in both information-theoretic and computational settings. These results show that there is a significant “price of differential privacy” in high-dimensional datasets. We prove these lower bounds using two closely related cryptographic primitives, fingerprinting codes (in the information-theoretic setting) and traitor-tracing schemes (in the computational setting), which we show are closely connected to differentially private data analysis. I will also discuss how these lower bounds relate to realistic attacks on released datasets.