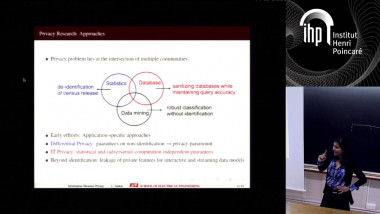

Privacy-preserving Analysis of Correlated Data

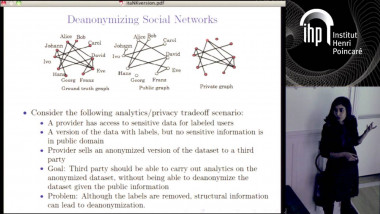

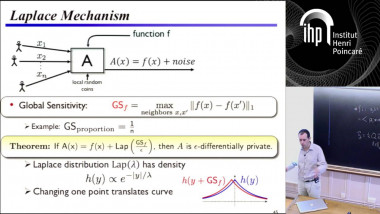

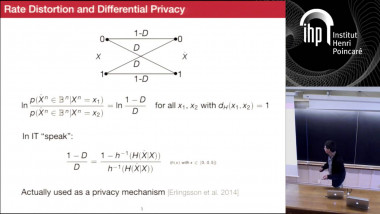

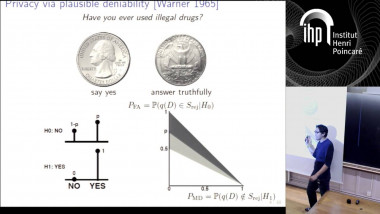

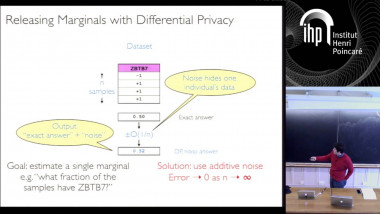

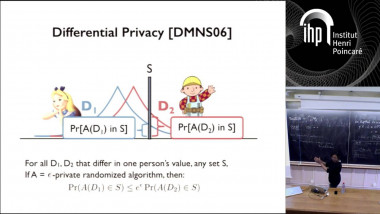

Many modern machine learning applications involve private, sensitive data that are highly correlated. Examples include mining time series of physical activity measurements and mining user data from social networks. Unfortunately, differential privacy, the standard notion of privacy in statistical databases, does not apply directly to such data; as a result, there is a need for mechanisms that operate on correlated data and still provide privacy guarantees. In this talk, we consider Pufferfish, a generalization of differential privacy that applies to correlated data. We provide novel privacy mechanisms for Pufferfish and establish performance guarantees for them. Finally, we look at a case study with Pufferfish privacy: analyzing aggregate statistics of a time series of physical activity measurements.
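
To make the motivation concrete, here is a minimal Python sketch of why correlation matters for an aggregate statistic. It is not the mechanism presented in the talk. It uses the standard Laplace mechanism on a sum, and the binary activity series, the function names, and the "fully correlated" extreme case are all illustrative assumptions.

```python
import random

def laplace_noise(scale, rng):
    # The difference of two i.i.d. exponentials with mean `scale`
    # is Laplace-distributed with location 0 and that scale.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def laplace_release(values, epsilon, sensitivity, rng):
    # Standard Laplace mechanism for a sum: noise scale = sensitivity / epsilon.
    return sum(values) + laplace_noise(sensitivity / epsilon, rng)

def shift_in_sum(series_a, series_b):
    # How far the true sum moves between two candidate series.
    return abs(sum(series_a) - sum(series_b))

# Hypothetical binary activity series (1 = active, 0 = resting), length 10.
T = 10
independent_a = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]
independent_b = [0] + independent_a[1:]  # one entry changes, the rest stay fixed

# Assumed extreme correlation: every time step takes the same value,
# so changing one entry implies changing all of them.
correlated_a = [1] * T
correlated_b = [0] * T

shift_independent = shift_in_sum(independent_a, independent_b)
shift_correlated = shift_in_sum(correlated_a, correlated_b)

rng = random.Random(0)
noisy_sum = laplace_release(independent_a, epsilon=1.0, sensitivity=1.0, rng=rng)
```

With independent entries, changing one measurement moves the sum by 1, so noise of scale 1/ε hides it. In the fully correlated toy case the same change moves the sum by T, so noise calibrated to a single entry no longer masks it. Pufferfish-style definitions are meant to account for this kind of dependence.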