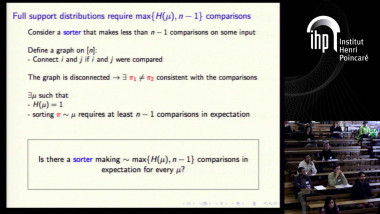

The information theoretic lower bound for comparison based sorting is (almost) tight, even when there is an arbitrary known distribution on the input array

By Shay Moran

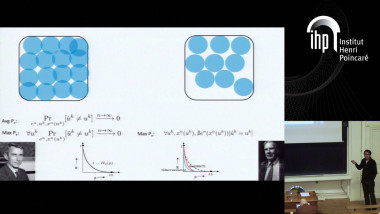

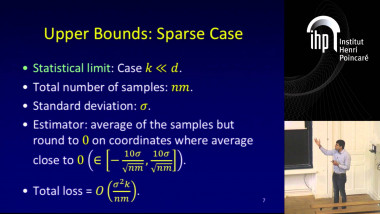

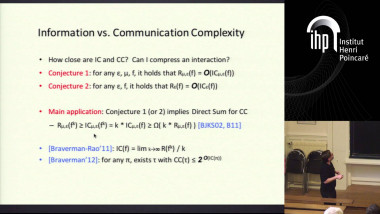

I will discuss the classical problem of sorting n elements when the input array is drawn from an arbitrary known distribution and the goal is to minimize the expected number of comparisons. I will present a simple variant of insertion sort which provides tight upper bound: If μ is a distribution on permutations on n elements, then one may sort inputs from μ with expected number of comparisons that is at most H(μ)+2n, where H is the entropy function. The algorithm uses less comparisons for more probable inputs: For every permutation π, the algorithm sorts π by using at most log_2(1/Pr_μ(π))+2n comparisons. A lower bound on the expected number of comparisons of H(μ) always holds, and a linear dependence on n is also required. I will also suggest directions for further research. The talk is based on a joint work with Amir Yehudayoff.