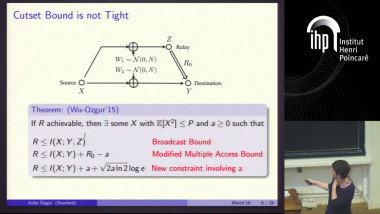

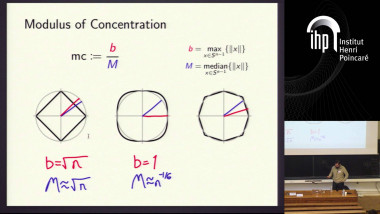

Improving on the cut-set bound via measure concentration

By Ayfer Özgür

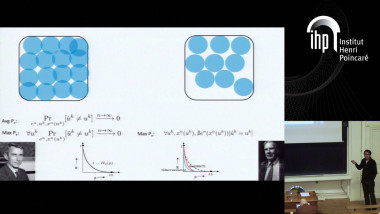

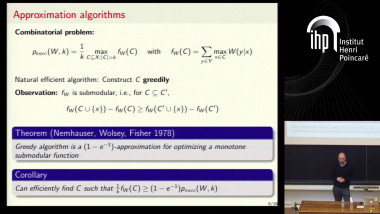

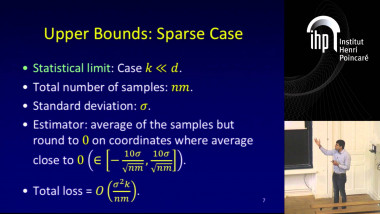

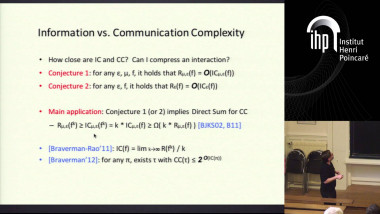

The cut-set bound, developed by Cover and El Gamal in 1979, has remained the best known upper bound on the capacity of the Gaussian relay channel. We develop a new upper bound on the capacity of the Gaussian primitive relay channel that is tighter than the cut-set bound. Combined with a simple tensorization argument proposed by Courtade and Özgür in 2015, our result also implies that the current capacity approximations for Gaussian relay networks, whose gap to the cut-set bound is linear in the number of nodes, are order-optimal, and it yields a lower bound on the preconstant. The more important contribution of this talk is a new converse technique that differs significantly from existing approaches in the literature and is potentially useful for other multi-user problems. In general, proving an upper bound on the capacity of a multi-user channel involves establishing information inequalities between the random variables induced by a reliable code and the channel structure. In this talk, we prove such inequalities using typicality arguments and concentration of measure. We believe the talk will be of significant interest to workshop participants, as it proposes a new way to prove information inequalities and a new application of concentration-of-measure inequalities to information-theoretic problems, beyond traditional applications such as proving strong converses for multi-user problems.
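
For orientation, the cut-set bound can be written out for the primitive relay channel. The abstract does not specify a model, so the setup and notation below are assumptions taken from the standard formulation. The source sends $X$, the destination observes $Y$, the relay observes $Z$, and the relay reaches the destination over a noiseless link of rate $R_0$. The symbols $P$, $N_1$, $N_2$ and $R_0$ are introduced here for illustration only.

$$
C(R_0) \;\le\; \max_{p(x)} \min\bigl\{\, I(X;Y,Z),\; I(X;Y) + R_0 \,\bigr\}
$$

In the Gaussian case with $Y = X + W_1$ and $Z = X + W_2$, independent noises of variance $N_1$ and $N_2$, and input power constraint $P$, a Gaussian input maximizes both terms simultaneously, and the bound becomes

$$
C(R_0) \;\le\; \min\left\{\, \tfrac{1}{2}\log\!\left(1 + \tfrac{P}{N_1} + \tfrac{P}{N_2}\right),\;\; \tfrac{1}{2}\log\!\left(1 + \tfrac{P}{N_1}\right) + R_0 \,\right\}.
$$

This is the bound that the result described above improves on. The new bound itself is not stated in the abstract and is not reproduced here.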