A Computable Measure of Suboptimality for Entropy-Regularised Variational Objectives

By Anna Korba

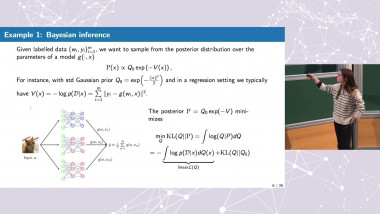

Asymptotic Theory of Iterated Empirical Risk Minimization, with Applications to Active Learning

By Hugo Cui

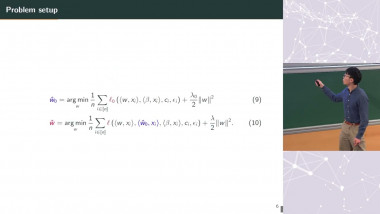

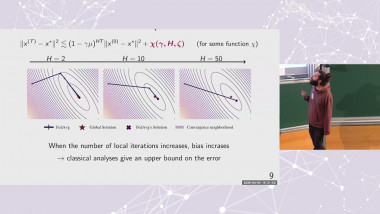

Convergence and Linear Speed-Up in Stochastic Federated Learning

By Paul Mangold

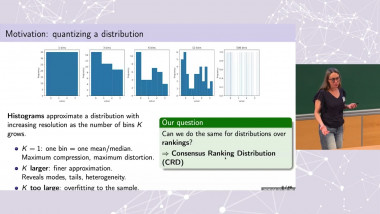

Beyond Kemeny Medians: Consensus Ranking Distributions Definition, Properties and Statistical Learning

By Ekhine Irurozki