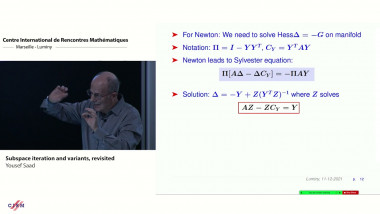

Subspace iteration and variants, revisited

By Yousef Saad

Computing invariant subspaces is at the core of many applications, from machine learning and signal processing to control theory, to name just a few. Often one wishes to compute the subspace associated with eigenvalues located at one end of the spectrum, i.e., either the largest or the smallest eigenvalues. In addition, the data at hand frequently changes, so one must keep updating, or tracking, the target invariant subspace. The talk will present standard tools for computing invariant subspaces, with a focus on methods that do not require solving linear systems. One of the best-known techniques for computing invariant subspaces is the subspace iteration algorithm [2]. Although this algorithm tends to be slower than a Krylov subspace approach such as the Lanczos algorithm, it has several attributes that make it the method of choice in many applications. One of these is its tolerance of changes in the matrix. An alternative framework that will be emphasized is that of Grassmann manifolds [1]. We will derive gradient-type methods and show the many connections between the different viewpoints adopted by practitioners, e.g., the TraceMin algorithm [3]. The talk will end with a few illustrative examples.
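As a rough illustration of the subspace iteration algorithm mentioned above, here is a minimal pure-Python sketch of its most basic form (orthogonal, or simultaneous, iteration) for a symmetric matrix. It is a simplification under stated assumptions, not the method presented in the talk: it omits the Rayleigh-Ritz projection and polynomial acceleration that practical codes typically add, and the names `subspace_iteration`, `orthonormalize`, and the warm-start argument `X0` are hypothetical. The iteration only needs products with the matrix, so no linear systems are solved. It converges to the eigenvalues of largest modulus; to target the smallest end of the spectrum without solves, one can, for example, apply it to `σI - A` with a shift `σ` at least as large as the largest eigenvalue.

```python
from __future__ import annotations

import math
import random


def matvec(A: list[list[float]], v: list[float]) -> list[float]:
    """Dense matrix-vector product A @ v, with A stored as a list of rows."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]


def dot(u: list[float], v: list[float]) -> float:
    return sum(a * b for a, b in zip(u, v))


def orthonormalize(cols: list[list[float]]) -> list[list[float]]:
    """Modified Gram-Schmidt on a list of column vectors.

    Assumes the columns are linearly independent (true with probability 1
    for a random starting block)."""
    basis: list[list[float]] = []
    for v in cols:
        w = list(v)
        for q in basis:
            c = dot(q, w)
            w = [wi - c * qi for wi, qi in zip(w, q)]
        norm = math.sqrt(dot(w, w))
        basis.append([wi / norm for wi in w])
    return basis


def subspace_iteration(A, k, tol=1e-8, max_iter=1000, X0=None, seed=0):
    """Approximate the k eigenpairs of largest modulus of a symmetric matrix A.

    Returns (eigenvalue estimates, orthonormal basis as k columns, iterations).
    X0 (k vectors of length n) optionally warm-starts the iteration, e.g. from
    the basis computed for a previous version of A (hypothetical interface)."""
    n = len(A)
    if X0 is None:
        rng = random.Random(seed)
        X0 = [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(k)]
    Q = orthonormalize(X0)
    for it in range(1, max_iter + 1):
        Z = [matvec(A, q) for q in Q]                # block product A Q
        theta = [dot(q, z) for q, z in zip(Q, Z)]    # Rayleigh quotients q^T A q
        res = max(                                   # largest residual ||A q - theta q||
            math.sqrt(sum((zi - t * qi) ** 2 for zi, qi in zip(z, q)))
            for q, z, t in zip(Q, Z, theta)
        )
        if res < tol:
            return theta, Q, it
        Q = orthonormalize(Z)                        # next basis: orth(A Q)
    return theta, Q, max_iter


if __name__ == "__main__":
    # Test matrix: tridiag(-1, 2, -1) of size 5, eigenvalues 2 - 2cos(j*pi/6).
    n = 5
    A = [[2.0 if i == j else (-1.0 if abs(i - j) == 1 else 0.0) for j in range(n)]
         for i in range(n)]
    theta, Q, its = subspace_iteration(A, k=2)
    print(theta, its)  # theta is approximately [2 + sqrt(3), 3]
```

Passing a previously computed basis as `X0` is what makes tracking cheap in this sketch: if the matrix changes only slightly, the old basis is already close to the new invariant subspace, so fewer iterations are typically needed than from a random start.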
