Nonnegative matrix factorisation with the beta-divergence for robust hyperspectral unmixing

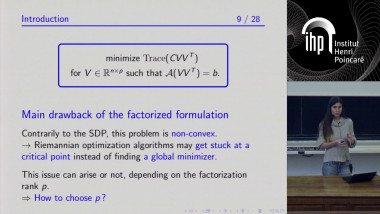

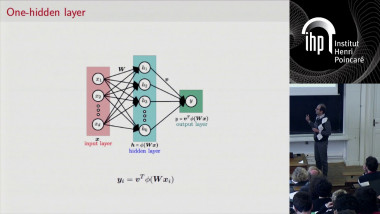

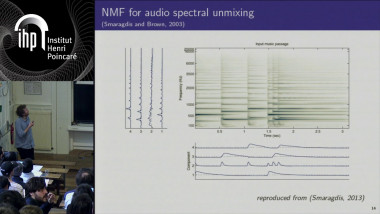

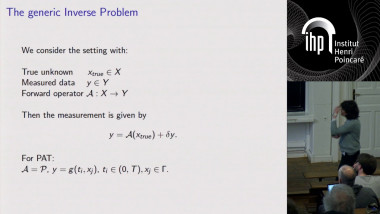

Data is often available in matrix form, with columns as samples, and processing such data often entails finding an approximate factorisation of the matrix into two factors. The first factor (the “dictionary”) captures recurring patterns characteristic of the data. The second factor (the “activation matrix”) describes the proportions in which each data sample combines these patterns. Nonnegative matrix factorisation (NMF) is a popular technique for analysing data with nonnegative values, with applications in many areas such as text information retrieval, user recommendation, audio signal processing, and hyperspectral imaging. In the first part of the talk, I will present a general majorisation-minimisation framework for NMF with the beta-divergence, a continuous family of loss functions that includes the quadratic loss, the Kullback-Leibler (KL) divergence and the Itakura-Saito divergence as special cases. In the second part, I will present applications to hyperspectral unmixing in remote sensing and to factor analysis in dynamic positron emission tomography (PET), introducing robust variants of NMF that account for outliers, nonlinear phenomena or specific binding. Joint work with Nicolas Dobigeon.
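
For readers who want a concrete picture of the first part, the following is a minimal sketch of NMF with the beta-divergence using the standard multiplicative updates that a majorisation-minimisation argument yields. It is an illustration under stated assumptions, not necessarily the algorithm presented in the talk, and it does not cover the robust variants. The function names (`beta_divergence`, `mm_exponent`, `nmf_beta`), the random initialisation, the iteration count and the small constant `eps` are all hypothetical choices made for this example. Here beta = 2 corresponds to the quadratic loss, beta = 1 to the KL divergence and beta = 0 to the Itakura-Saito divergence.

```python
import numpy as np


def beta_divergence(V, V_hat, beta):
    """Sum of element-wise beta-divergences between V and V_hat (both > 0)."""
    if beta == 0:  # Itakura-Saito divergence
        R = V / V_hat
        return float(np.sum(R - np.log(R) - 1.0))
    if beta == 1:  # generalised Kullback-Leibler divergence
        return float(np.sum(V * np.log(V / V_hat) - V + V_hat))
    # General case; beta == 2 gives half the squared Euclidean distance.
    num = V**beta + (beta - 1.0) * V_hat**beta - beta * V * V_hat**(beta - 1.0)
    return float(np.sum(num / (beta * (beta - 1.0))))


def mm_exponent(beta):
    """Exponent applied to the multiplicative update in the MM derivation."""
    if beta < 1:
        return 1.0 / (2.0 - beta)
    if beta > 2:
        return 1.0 / (beta - 1.0)
    return 1.0


def nmf_beta(V, rank, beta=1.0, n_iter=200, seed=0, eps=1e-12):
    """Approximate V (features x samples) by W @ H with nonnegative factors.

    W plays the role of the dictionary, H of the activation matrix.
    Initialisation and stopping rule are illustrative assumptions.
    """
    rng = np.random.default_rng(seed)
    n_features, n_samples = V.shape
    W = rng.random((n_features, rank)) + eps
    H = rng.random((rank, n_samples)) + eps
    gamma = mm_exponent(beta)
    losses = []
    for _ in range(n_iter):
        # Update the activations with the dictionary held fixed.
        V_hat = W @ H + eps
        H *= ((W.T @ (V * V_hat**(beta - 2.0)))
              / (W.T @ V_hat**(beta - 1.0) + eps)) ** gamma
        # Update the dictionary with the activations held fixed.
        V_hat = W @ H + eps
        W *= (((V * V_hat**(beta - 2.0)) @ H.T)
              / (V_hat**(beta - 1.0) @ H.T + eps)) ** gamma
        losses.append(beta_divergence(V, W @ H + eps, beta))
    return W, H, losses


# Synthetic, strictly positive data: 3 patterns mixed into 40 samples.
rng = np.random.default_rng(1)
V = rng.random((20, 3)) @ rng.random((3, 40)) + 1e-3
W, H, losses = nmf_beta(V, rank=3, beta=1.0)
```

In this sketch the columns of `W` would stand for spectral signatures and the columns of `H` for per-pixel proportions in a hyperspectral setting, although no sum-to-one constraint on the proportions is imposed here.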