Appears in collection: A Multiscale tour of Harmonic Analysis and Machine Learning - To Celebrate Stéphane Mallat's 60th birthday

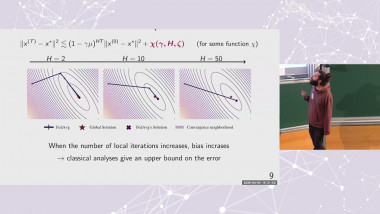

The human brain still largely outperforms robotic algorithms in most tasks, while using computational elements seven orders of magnitude slower than their artificial counterparts. Similarly, current large-scale machine learning algorithms require millions of examples and close proximity to power plants, compared to the brain's few examples and 20 W power consumption. We study how modern nonlinear systems tools, such as contraction analysis, virtual dynamical systems, and adaptive nonlinear control, can yield quantifiable insights about collective computation and learning in large dynamical networks. For instance, we show how stable implicit sparse regularization can be exploited in adaptive prediction or control to select relevant dynamic models out of plausible physically-based candidates, and how most elementary results on gradient descent based on convexity can be replaced by much more general results based on Riemannian contraction.
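
As a brief sketch of the last claim, under the standard contraction-analysis setup (the symbols $f$, $M$, and $\lambda$ are notation assumed here for illustration, not taken from the talk), consider the continuous-time gradient flow of a cost $f$ together with a uniformly positive definite metric $M(x)$:

$$
\dot{x} = -\nabla f(x), \qquad \dot{M} - M\,\nabla^2 f(x) - \nabla^2 f(x)\,M \preceq -2\lambda M, \quad \lambda > 0,
$$

where $\dot{M}$ denotes the derivative of $M(x)$ along trajectories. If the inequality holds everywhere, any two trajectories converge to each other exponentially at rate $\lambda$, so the flow reaches a single stationary point of $f$. Choosing $M = I$ reduces the condition to $\nabla^2 f(x) \succeq \lambda I$, which is ordinary strong convexity; a non-identity metric can satisfy the condition for costs that are not convex in the original coordinates.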