Optimal vector quantization: from signal processing to clustering and numerical probability

By Gilles Pagès

Appears in collections: CEMRACS - Summer school: Numerical methods for stochastic models: control, uncertainty quantification, mean-field; Research schools

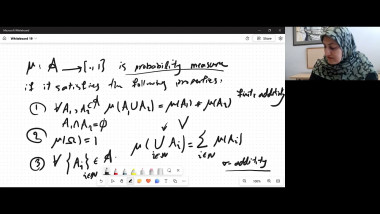

Optimal vector quantization was originally introduced in signal processing as a method for discretizing random signals, leading to an optimal trade-off between the speed of transmission and the quality of the transmitted signal. In machine learning, similar methods applied to a dataset are the historical core of the unsupervised classification methods known as “clustering”. In both cases it appears as an optimal way to produce a set of weighted prototypes (or codebook) which makes up a kind of skeleton of a dataset, of a signal and, more generally, from a mathematical point of view, of a probability distribution. In recent years quantization has attracted renewed interest in various application fields such as automatic classification, learning algorithms, optimal stopping and stochastic control, backward SDEs and, more generally, numerical probability. In all these applications, the practical implementation of such clustering/quantization methods relies more or less on two procedures (and their countless variants): Competitive Learning Vector Quantization (CLVQ), which appears as a stochastic gradient descent derived from the so-called distortion potential, and the (randomized) Lloyd's procedure (also known as the k-means algorithm, or nuées dynamiques), which is nothing but a fixed-point search procedure. Batch versions of these procedures can also be implemented when dealing with a dataset (or, more generally, a discrete distribution). More formally, if $\mu$ is a probability distribution on a Euclidean space $\mathbb{R}^d$, the optimal quantization problem at level $N$ boils down to exhibiting an $N$-tuple $(x_1^*, \dots, x_N^*)$, solution to

$\operatorname{argmin}_{(x_1,\dots,x_N)\in(\mathbb{R}^d)^N} \int_{\mathbb{R}^d} \min_{1\le i\le N} |x_i-\xi|^2 \, \mu(d\xi)$

and its distribution, i.e. the weights $(\mu(C(x_i^*)))_{1\le i\le N}$, where $(C(x_i^*))_{1\le i\le N}$ is a (Borel) partition of $\mathbb{R}^d$ satisfying

$C(x_i^*)\subset \lbrace\xi\in\mathbb{R}^d : |x_i^*-\xi|\le \min_{1\le j\le N} |x_j^*-\xi|\rbrace$.
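To make these objects concrete, here is a minimal NumPy sketch that estimates the distortion and the cell weights of a given $N$-tuple from a sample of $\mu$. The function names, the choice $\mu = \mathcal{N}(0, I_2)$ and the three-point codebook are illustrative assumptions; the codebook is arbitrary, not optimal. Ties on cell boundaries are assigned to the lowest index, which is one admissible choice of Borel partition.

```python
import numpy as np

def nearest_codeword(samples, codebook):
    """Return, for each sample, the index of its nearest codeword and the squared distance."""
    # Squared Euclidean distances, shape (n_samples, N).
    d2 = ((samples[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=2)
    # argmin breaks ties in favour of the lowest index.
    return d2.argmin(axis=1), d2.min(axis=1)

def distortion_and_weights(samples, codebook):
    """Monte Carlo estimates of the distortion integral and of the cell weights."""
    idx, d2min = nearest_codeword(samples, codebook)
    distortion = d2min.mean()  # estimates the integral of min_i |x_i - xi|^2 against mu
    weights = np.bincount(idx, minlength=len(codebook)) / len(samples)
    return distortion, weights

# Assumed setting for illustration: mu is the standard normal distribution on R^2.
rng = np.random.default_rng(0)
samples = rng.standard_normal((10_000, 2))
codebook = np.array([[-1.0, 0.0], [1.0, 0.0], [0.0, 1.0]])  # arbitrary, not optimal
distortion, weights = distortion_and_weights(samples, codebook)
```

The weights returned here are the empirical frequencies of the cells, so they sum to one by construction.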

To produce an unsupervised classification (or clustering) of a (large) dataset $(\xi_k)_{1\le k\le n}$, one considers its empirical measure

$\mu=\frac{1}{n}\sum_{k=1}^{n}\delta_{\xi_k}$

whereas in numerical probability $\mu = \mathcal{L}(X)$, where $X$ is an $\mathbb{R}^d$-valued random vector that can be simulated. In both situations, the CLVQ and Lloyd procedures rely on massive sampling of the distribution $\mu$. In clustering, the classification into $N$ clusters is produced by the partition of the dataset induced by the Voronoi cells $C(x_i^*)$, $i = 1, \dots, N$, of the optimal quantizer. In the second setting (numerical probability), which is of interest for solving nonlinear problems such as optimal stopping problems (variational inequalities in terms of PDEs) or stochastic control problems (HJB equations) in medium dimensions, the idea is to produce a quantization tree optimally fitting the dynamics of (a time discretization of) the underlying structure process. We will explore (briefly) this vast panorama with a focus on the algorithmic aspects, where few theoretical results coexist with many heuristics in a burgeoning literature. We will present a few simulations in two dimensions.
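The two procedures mentioned above can be sketched as follows, under stated assumptions: a batch Lloyd iteration on a dataset and a one-sample-at-a-time CLVQ recursion. The step schedule `gamma0 / (1 + decay * k)`, the rule that an empty cell keeps its codeword, the initialization from the first data points and all function names are illustrative choices, not prescriptions from the lecture.

```python
import numpy as np

def distortion(data, codebook):
    """Empirical distortion: mean squared distance to the nearest codeword."""
    d2 = ((data[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=2)
    return d2.min(axis=1).mean()

def lloyd(data, codebook, n_iter=50):
    """Batch Lloyd (k-means) iteration: a fixed-point search on the codebook."""
    codebook = codebook.copy()
    for _ in range(n_iter):
        d2 = ((data[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=2)
        idx = d2.argmin(axis=1)  # assign each point to its Voronoi cell
        for i in range(len(codebook)):
            cell = data[idx == i]
            if len(cell) > 0:  # assumed heuristic: an empty cell keeps its codeword
                codebook[i] = cell.mean(axis=0)  # replace the codeword by the cell mean
    return codebook

def clvq(sampler, codebook, n_steps=20_000, gamma0=0.5, decay=0.01):
    """CLVQ: stochastic gradient descent on the distortion, one sample per step."""
    codebook = codebook.copy()
    for k in range(n_steps):
        xi = sampler()
        # Competitive phase: find the codeword nearest to the new sample.
        i = ((codebook - xi) ** 2).sum(axis=1).argmin()
        # Learning phase: move the winner toward the sample (assumed step schedule).
        gamma = gamma0 / (1.0 + decay * k)
        codebook[i] += gamma * (xi - codebook[i])
    return codebook

# Assumed setting for illustration: standard normal distribution on R^2, N = 5.
rng = np.random.default_rng(1)
data = rng.standard_normal((5_000, 2))
init = data[:5].copy()
lloyd_codebook = lloyd(data, init)
clvq_codebook = clvq(lambda: rng.standard_normal(2), init)
```

Each Lloyd iteration cannot increase the empirical distortion, so `distortion(data, lloyd_codebook)` is at most `distortion(data, init)`. Neither procedure is guaranteed to reach a global minimizer, which is one reason why initialization and step-size heuristics matter in practice.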