Embedding of Low-Dimensional Attractor Manifolds by Neural Networks

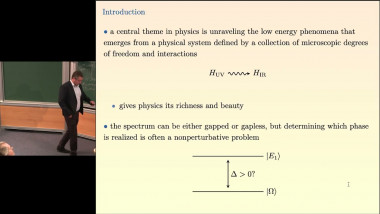

Recurrent neural networks (RNNs) have long been studied to explain how fixed-point attractors may emerge from noisy, high-dimensional dynamics. Recently, computational neuroscientists have devoted sustained effort to understanding how RNNs could embed attractor manifolds of finite dimension, in particular in the context of the representation of space by mammals. A natural question is whether there is a trade-off between the quantity (number) and the quality (accuracy of encoding) of the stored manifolds. Here I will study how to learn the N^2 pairwise interactions in an RNN with N neurons so as to embed L manifolds of dimension D << N. The capacity, i.e., the maximal ratio L/N, decreases as ~ [log(1/ε)]^(-D), where ε is the error on the position encoded by the neural activity along each manifold. These results, derived using a combination of analytical tools from statistical mechanics and random matrix theory, show that RNNs are flexible memory devices capable of storing a large number of manifolds at high spatial resolution.
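To give a feel for how slowly the capacity degrades with resolution, the scaling law quoted above can be tabulated numerically. The following is only an illustrative sketch: the abstract states the functional form [log(1/error)]^(-D) but not the proportionality constant, so the `prefactor` argument (set to 1 here) and the function name `capacity_scaling` are assumptions made for the example, with `eps` denoting the position error and `dim` the manifold dimension D.

```python
import math


def capacity_scaling(eps: float, dim: int, prefactor: float = 1.0) -> float:
    """Return prefactor * [log(1/eps)]^(-dim).

    Only the functional form comes from the abstract; the prefactor is
    unknown and set to 1.0 purely for illustration.
    """
    if not 0.0 < eps < 1.0:
        raise ValueError("eps must lie strictly between 0 and 1")
    return prefactor * math.log(1.0 / eps) ** (-dim)


if __name__ == "__main__":
    # Tabulate the (unnormalized) capacity for a few resolutions and dimensions.
    for dim in (1, 2):
        for eps in (1e-1, 1e-2, 1e-3):
            value = capacity_scaling(eps, dim)
            print(f"D={dim}, eps={eps:g}: L/N ~ {value:.4f} (up to a constant)")
```

Because the dependence on the error is only logarithmic, reducing `eps` by a factor of ten changes the estimate by a modest amount, which is the sense in which many manifolds can be stored at high spatial resolution.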