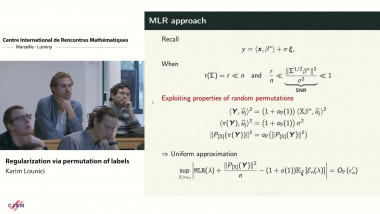

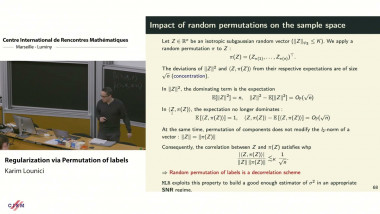

Regularization via permutation of labels - Lecture 1

A novel regularization technique based on random permutations of the labels was recently proposed for tuning hyper-parameters. Although this technique has been used successfully in practice to train neural networks, little is known about its theoretical properties. This talk will present several results toward a theoretical understanding of the permutation technique in the simple Ridge regression model. These results combine a statistical analysis of the impact of random permutations on the geometry of the model with several novel perturbation bounds on the spectral decomposition of empirical covariance operators, which may be of independent interest. Interestingly, this technique makes it possible to build a novel smooth in-sample estimator of the excess risk, which can be used to efficiently optimize the continuous hyper-parameters of regular models via gradient descent. This presentation is based in part on joint work with K. Meziani, G. Pacreau and B. Riu.
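To make the idea concrete, here is a minimal sketch of what a permutation-based in-sample criterion for Ridge regression might look like. It is an illustration under stated assumptions, not necessarily the estimator analyzed in the talk: the names `ridge_hat_matrix` and `permutation_criterion`, the specific form of the criterion (in-sample error on the true labels minus the average in-sample error on randomly permuted labels), and the synthetic data are all hypothetical choices made for this example.

```python
import numpy as np


def ridge_hat_matrix(X, lam):
    """Ridge hat matrix H(lam) = X (X^T X + lam I)^{-1} X^T."""
    d = X.shape[1]
    return X @ np.linalg.solve(X.T @ X + lam * np.eye(d), X.T)


def permutation_criterion(X, y, lam, n_perm=20, seed=0):
    """Assumed criterion: in-sample RMSE on the true labels minus the
    mean in-sample RMSE on randomly permuted labels (lower is better).

    Reusing the same seed for every lam means the same permutations are
    compared across values of lam, so the criterion varies smoothly in lam.
    """
    rng = np.random.default_rng(seed)
    H = ridge_hat_matrix(X, lam)
    n = len(y)

    def in_sample_rmse(labels):
        return np.linalg.norm(labels - H @ labels) / np.sqrt(n)

    fit_true = in_sample_rmse(y)
    fit_perm = np.mean([in_sample_rmse(rng.permutation(y)) for _ in range(n_perm)])
    return fit_true - fit_perm


# Synthetic data (hypothetical): linear signal plus small Gaussian noise.
data_rng = np.random.default_rng(42)
n, d = 100, 5
X = data_rng.standard_normal((n, d))
y = X @ np.ones(d) + 0.1 * data_rng.standard_normal(n)

# Scan a grid of ridge penalties and keep the minimizer of the criterion.
lams = np.logspace(-3, 3, 13)
scores = [permutation_criterion(X, y, lam) for lam in lams]
best_lam = lams[int(np.argmin(scores))]
print(best_lam)
```

The sketch uses a grid scan for simplicity. Because the criterion is a smooth function of the penalty, the same quantity could in principle be minimized by gradient descent, for instance with an automatic differentiation library, which is the use case the abstract points to. Note that no held-out data is involved: the permuted labels play the role of a reference fit with the dependence between features and labels destroyed.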