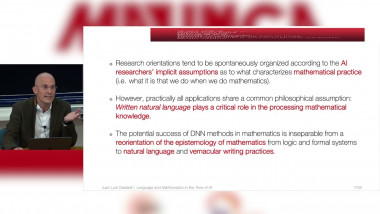

Language and Mathematics in the Time of AI. Philosophical and Theoretical Perspectives

De Juan Luis Gastaldi

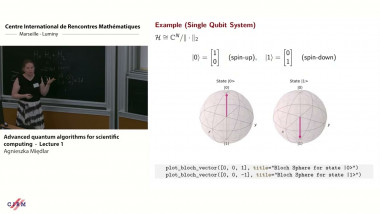

Advanced quantum algorithms for scientific computing - lecture 1

De Agnieszka Międlar

By Yousef Saad

Appears in the collection: Numerical Linear Algebra / Algèbre Linéaire Numérique

In an era where Artificial Intelligence (AI) is permeating virtually every field of science and engineering, it is becoming critical for members of the numerical linear algebra community to understand and embrace AI, to contribute to its advancement, and more broadly to contribute to the advancement of machine learning (ML). What is fascinating and rather encouraging is that Numerical Linear Algebra (NLA) is at the core of machine learning and AI. In this talk we will give an overview of Deep Learning with an emphasis on Large Language Models (LLMs) and Transformers [3, 4]. The very first step of LLMs is to convert the problem into one that can be exploited by numerical methods, or to be more accurate, by optimization techniques. All AI methods rely almost entirely on essentially four ingredients: data, optimization methods, statistical intuition, and linear algebra. Thus, the first task is to map words or sentences into tokens, which are then embedded into Euclidean spaces. From there on, the models work with vectors and matrices. We will show a few examples of important developments in ML that were heavily based on linear algebra ideas. Among these, we will briefly discuss LoRA [1], a technique in which low-rank approximation is used to reduce the computational cost of some models, leading to gains of a few orders of magnitude. Another contribution that used purely algebraic arguments and had a major impact on LLMs is the article [2]. Here the main discovery is that the nonlinear "self-attention" in LLMs can be approximated linearly, resulting in huge savings in computation, as the computational complexity in the sequence length $n$ decreases from $O\left(n^2\right)$ to $O(n)$. The talk will be mostly a survey of known recent methods in AI with the primary goal of unraveling the mathematics of Transformers. A secondary goal is to initiate a discussion on how NLA specialists can participate in AI research.
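The drop from $O\left(n^2\right)$ to $O(n)$ can be illustrated with a small NumPy sketch. It shows one common way of linearizing attention and is not necessarily the construction used in [2]. The softmax is replaced by a positive feature map (here `elu(x) + 1`, an assumption made for this example), after which the product can be regrouped by associativity so that the $n \times n$ matrix is never formed. All function names are hypothetical, and the sketch covers only the non-causal case. The kernelized form is itself a substitute for softmax attention; what the code checks is that the two ways of evaluating it agree.

```python
import numpy as np

def feature_map(x):
    # elu(x) + 1, a positive feature map (an assumption for this sketch)
    return np.where(x > 0, x + 1.0, np.exp(x))

def attention_quadratic(Q, K, V):
    # Forms the n x n similarity matrix explicitly: cost grows like n^2.
    A = feature_map(Q) @ feature_map(K).T      # shape (n, n)
    A = A / A.sum(axis=1, keepdims=True)       # row normalization
    return A @ V

def attention_linear(Q, K, V):
    # Same quantity, regrouped as phi(Q) (phi(K)^T V): cost grows like n.
    phi_q, phi_k = feature_map(Q), feature_map(K)
    KV = phi_k.T @ V                           # shape (d, d), independent of n
    z = phi_k.sum(axis=0)                      # shape (d,), normalizer terms
    return (phi_q @ KV) / (phi_q @ z)[:, None]

rng = np.random.default_rng(0)
n, d = 256, 16                                 # sequence length, head dimension
Q, K, V = (rng.standard_normal((n, d)) for _ in range(3))

out_quadratic = attention_quadratic(Q, K, V)
out_linear = attention_linear(Q, K, V)
max_diff = np.max(np.abs(out_quadratic - out_linear))
print(max_diff)  # agreement up to floating-point rounding
```

The only linear algebra involved is associativity of the matrix product: the intermediate `KV` has size $d \times d$, so for a fixed head dimension $d$ the work scales linearly with $n$.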